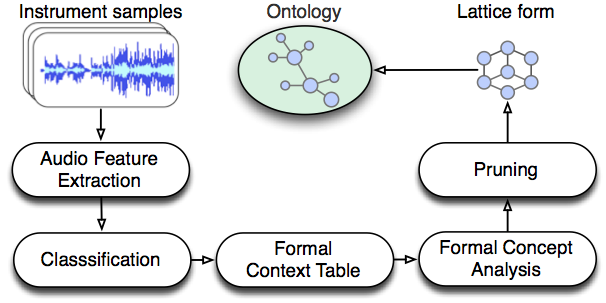

The automatic ontology generation system performs two main tasks: i) musical instrument recognition, and ii) the construction of instrument concept hierarchies. In the first part, the hybrid system uses either a Multi-Layer Perceptron neural network or Support Vector Machines to model the relationships between instruments (e.g., violin) and their attributes (e.g., bowed) using content-based timbre features. In the second part, the output of the instrument recognition system is processed using Formal Concept Analysis to construct a conceptual hierarchy for musical instruments.
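The Formal Concept Analysis step can be illustrated with a minimal sketch. It assumes the recognition stage has already produced a binary instrument/attribute table. The `CONTEXT` table and the function names below are hypothetical and chosen for illustration; they are not data or code from the published system. The brute-force enumeration is only practical for tiny contexts and is not necessarily the algorithm used in [1] or [2].

```python
from itertools import combinations

# Hypothetical instrument/attribute table (illustrative only).
CONTEXT = {
    "violin": {"string", "bowed"},
    "cello": {"string", "bowed"},
    "guitar": {"string", "plucked"},
    "flute": {"wind"},
}


def common_attributes(objects, context, all_attributes):
    """Attributes shared by every object in `objects` (all attributes if empty)."""
    shared = set(all_attributes)
    for obj in objects:
        shared &= context[obj]
    return frozenset(shared)


def objects_having(attributes, context):
    """Objects that possess every attribute in `attributes`."""
    return frozenset(o for o, attrs in context.items() if attributes <= attrs)


def formal_concepts(context):
    """Enumerate all (extent, intent) pairs by closing every object subset."""
    all_attributes = set().union(*context.values())
    objects = sorted(context)
    concepts = set()
    for size in range(len(objects) + 1):
        for subset in combinations(objects, size):
            intent = common_attributes(subset, context, all_attributes)
            extent = objects_having(intent, context)
            concepts.add((extent, intent))
    return concepts


def is_subconcept(lower, upper):
    """A concept lies below another when its extent is contained in the other's."""
    return lower[0] <= upper[0]


if __name__ == "__main__":
    # Print concepts from the most general (largest extent) to the most specific.
    for extent, intent in sorted(formal_concepts(CONTEXT), key=lambda c: -len(c[0])):
        print(sorted(extent), sorted(intent))
```

Each concept pairs a set of instruments (the extent) with the attributes they all share (the intent). Ordering concepts by extent inclusion gives the hierarchy: in this toy table, the concept for bowed string instruments sits below the concept for string instruments in general.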

The system is based on a general conceptual analysis approach and can be applied to any research field that deals with knowledge management issues. For more details regarding the generated OWL files published in [1], see this link. For the complete OWL files generated in the experiment published in [2], see this link.

[1] Sefki Kolozali, Mathieu Barthet, George Fazekas, Mark Sandler. Automatic Ontology Generation for Musical Instruments based on Audio Analysis. IEEE Transactions on Audio, Speech, and Language Processing, 2013.

[2] Sefki Kolozali, George Fazekas, Mathieu Barthet, Mark Sandler. A Framework for Automatic Ontology Generation Based on Semantic Audio Analysis. In Proceedings of the 53rd International Conference of the Audio Engineering Society on Semantic Audio, 2014.